New Archetypes in Generative Art

by Benji Friedman

An archetype is a pattern so fundamental that it recurs across cultures, across centuries, across media. The guardian at the gate. The tree of life. The flood. The trickster. These forms persist because they encode something deep about human experience — something that transcends any individual expression.

Generative AI is doing something unprecedented: it is creating new archetypes. Forms that have never existed in any single image but emerge from the statistical compression of billions of them. Patterns that no human artist invented but that feel immediately, viscerally recognizable.

The Model as Myth-Maker

A diffusion model trained on billions of images has, in a very real sense, seen more of human visual culture than any human ever could. It has absorbed the full breadth of our image-making — from cave paintings reproduced in textbooks to yesterday’s Instagram posts. When this model generates images, it draws on all of this simultaneously.

The result is not pastiche. It is synthesis in the deepest sense — the creation of forms that distill visual concepts across their entire history of representation. When I generate images of guardian figures in my Lamassu series, the model doesn’t just reproduce Assyrian sculpture. It produces something that carries the weight of the guardian archetype — drawing on every depiction of protective figures it has ever encountered, from ancient reliefs to superhero comics to corporate security logos.

The output is a new expression of an ancient pattern — an archetype refreshed through technological synthesis.

Lamassu series — the guardian archetype expressed through generative AI, drawing on thousands of years of protective figure imagery.

Emergent Forms

Beyond refreshing existing archetypes, generative models produce forms that are genuinely new — patterns that emerge from the data but that no individual image in the training set contains. These are what I think of as emergent archetypes: visual concepts that exist only in the latent space of the model, born from the intersection of millions of images.

You see this most clearly in low CFG outputs: images generated with a low classifier-free guidance (CFG) scale, so the text prompt exerts only a weak pull on the result. Certain forms recur across different seeds and different models: organic-mechanical hybrids, landscapes that are simultaneously interior and exterior, faces that resolve and dissolve at the same time. These are not copies of anything a particular artist has drawn. They are new visual concepts, patterns that the model has discovered in the aggregate of human image culture.
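For readers unfamiliar with the term: in most diffusion pipelines, classifier-free guidance runs the model once with the prompt and once without it at each denoising step, then combines the two noise predictions. The sketch below illustrates that blending formula in its common parameterization (conventions vary between tools). It is not code from any particular tool. The function name `cfg_combine` is hypothetical, and short lists of floats stand in for the model's real noise-prediction tensors.

```python
def cfg_combine(eps_uncond, eps_cond, guidance_scale):
    """Classifier-free guidance blend (common parameterization, illustrative).

    eps_uncond: noise prediction made with an empty prompt
    eps_cond:   noise prediction made with the user's prompt
    Lists of floats stand in for the model's real tensors.
    """
    # Start from the unconditional prediction and move along the direction
    # the prompt points, scaled by guidance_scale.
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Toy predictions, not real model outputs.
uncond = [0.0, 1.0]
cond = [1.0, 3.0]

for scale in (0.0, 1.0, 2.0):
    print(scale, cfg_combine(uncond, cond, scale))
# 0.0 [0.0, 1.0]  (prompt ignored)
# 1.0 [1.0, 3.0]  (plain prompt-conditioned prediction)
# 2.0 [2.0, 5.0]  (pushed further toward the prompt)
```

At a scale of 0 the prompt drops out entirely; at 1 you get the plain prompt-conditioned prediction; larger values push harder toward the prompt. "Low CFG" in the sense used here means values toward the bottom of that range, where the prompt's pull is weak and more of the image comes from what the model has learned on its own.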

These emergent forms feel archetypal precisely because they are derived from such a vast corpus. They are visual averages, but not in the bland sense — they are concentrated forms, distillations of visual energy from across the entire dataset.

The Plant-Person: A Case Study

My Plant People series emerged from exploring the boundary between human and botanical forms. The prompt was simple — variations on figures merged with plant life. But what the model produced went beyond illustration. It tapped into something ancient: the Green Man of medieval architecture, the dryads of Greek myth, the tree spirits of Japanese folklore, the plant deities of Mesoamerican religion.

The model had never been told about any of these traditions explicitly. But because it had absorbed images influenced by all of them, its outputs carried their collective resonance. The Plant People are not illustrations of a myth — they are a new expression of an archetype that has existed across cultures for millennia. The technology gave it a new form.

Plant People — the human-plant hybrid archetype, expressed through generative AI without explicit reference to any mythological tradition.

Patterns No One Designed

One of the most fascinating aspects of working with generative models is discovering patterns that no one put there intentionally. No human curated the training data with specific visual concepts in mind; the model learns from a volume of captioned images that no person could ever review individually. The patterns that emerge are found, not placed.

This is analogous to how archetypes work in Jungian psychology — they are not invented by any individual but emerge from the collective unconscious. The model’s latent space functions as a kind of collective visual unconscious: a compressed representation of everything humanity has depicted, from which new forms can be drawn that carry the weight of that entire history.

When I explore low CFG generation, I am essentially asking the model to free-associate: to produce whatever emerges from this compressed visual memory with the constraint of language loosened. The recurring forms that appear across hundreds of generations are the model's archetypes: the patterns it returns to when left to its own devices.

Technology as Archaeological Tool

There is a way to think about generative AI not as a tool for creating new images, but as a tool for excavating patterns that already exist in our visual culture but have never been made explicit. The model has seen the patterns. It has compressed them. And when we prompt it — or let it run free — it surfaces those patterns in visible form.

In this framing, the synthographer is part artist, part archaeologist. We are using technology to dig into the accumulated visual knowledge of human civilization and pull out forms that were always latent but never manifest. The Forest Mazes series, for instance, draws on the relationship between natural growth patterns and human-made labyrinths, a connection that exists across cultures but that I could never have illustrated by hand with the density and strangeness the model produces.

Forest Mazes — the intersection of organic growth and labyrinthine structure, an emergent pattern from generative AI.

The Feedback Loop

Something interesting is happening as AI-generated images enter the broader visual culture. These new forms, these emergent archetypes, are being seen, shared, remixed, and absorbed back into the cultural stream. Future models will include AI-generated images in their training sets. The archetypes will compound.

This creates a feedback loop between human visual culture and machine synthesis. We make images that train models that make images that influence humans that make images. The archetypes that emerge from this loop will be genuinely hybrid — neither purely human nor purely machine, but something new born from the interaction.

We are at the beginning of this process. The first generation of AI-native archetypes is just now entering the cultural vocabulary. In a decade, some of these forms will feel as natural and inevitable as the archetypes that have persisted for thousands of years. They will have always been there — because in a sense, they were. The technology just made them visible.

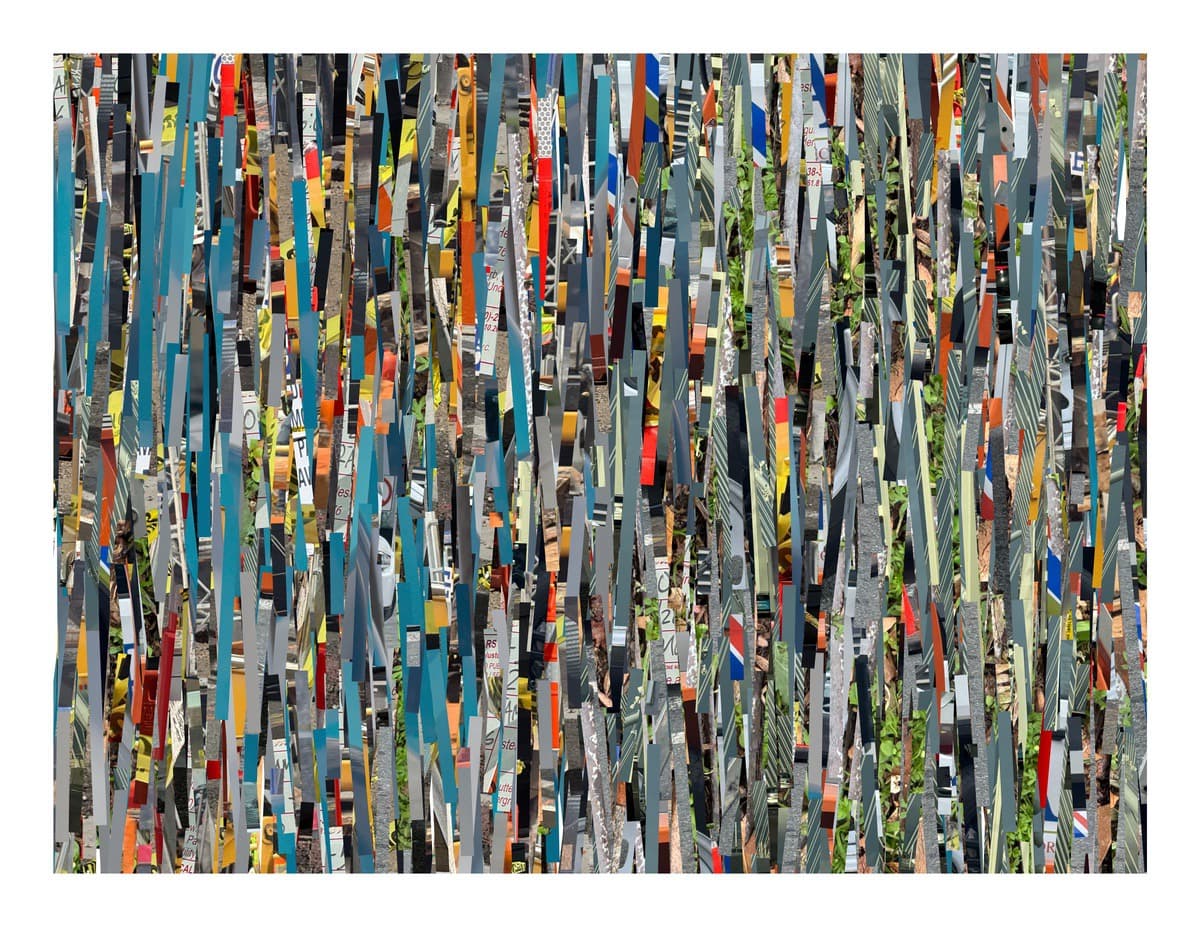

The technology-nature hybrid: another emergent archetype, where organic life and technological form merge into something that is neither.

Flower Trash — beauty emerging from decay, an ancient pattern given new form through AI synthesis.

Explore the series referenced in this article: Lamassu · Plant People · Forest Mazes · Low CFG 2025 · Flower Trash · Behance